
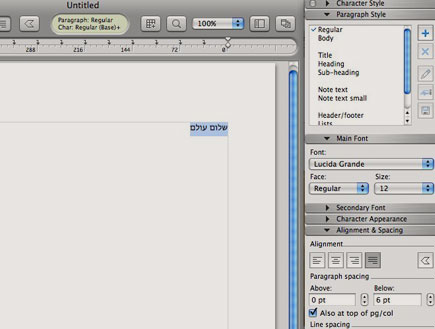

9/20/2023 Mellel alignment problems

AI Alignment: Why It’s Hard, and Where to Start

December … | Eliezer Yudkowsky | Analysis, Video

Back in May, I gave a talk at Stanford University for the Symbolic Systems Distinguished Speaker series, titled “The AI Alignment Problem: Why It’s Hard, And Where To Start.” The video for this talk is now available on YouTube:

We have an approximately complete transcript of the talk and Q&A session here, slides here, and notes and references here. You may also be interested in a shorter version of this talk I gave at NYU in October, “Fundamental Difficulties in Aligning Advanced AI.”

In the talk, I introduce some open technical problems in AI alignment and discuss the bigger picture into which they fit, as well as what it’s like to work in this relatively new field. Below, I’ve provided an abridged transcript of the talk, with some accompanying slides.

1.1. Coherent decisions imply a utility function

In this talk, I’m going to try to answer the frequently asked question, “Just what is it that you do all day long?” We are concerned with the theory of artificial intelligences that are advanced beyond the present day, and that make sufficiently high-quality decisions in the service of whatever goals they may have been programmed with to be objects of concern.

The classic initial stab at this was taken by Isaac Asimov with the Three Laws of Robotics, the first of which is: “A robot may not injure a human being or, through inaction, allow a human being to come to harm.”

And as Peter Norvig observed, the other laws don’t matter, because there will always be some tiny possibility that a human being could come to harm.

Artificial Intelligence: A Modern Approach has a final chapter that asks, “Well, what if we succeed? What if the AI project actually works?” and observes, “We don’t want our robots to prevent a human from crossing the street because of the non-zero chance of harm.”

To begin with, I’d like to explain the truly basic reason why the three laws aren’t even on the table, and that is because they’re not a utility function, and what we need is a utility function.

Utility functions arise when we have constraints on agent behavior that prevent them from being visibly stupid in certain ways. For example, suppose you state the following: “I prefer being in San Francisco to being in Berkeley, I prefer being in San Jose to being in San Francisco, and I prefer being in Berkeley to San Jose.” You will probably spend a lot of money on Uber rides going between these three cities.

If you’re not going to spend a lot of money on Uber rides going in literal circles, we see that your preferences must be ordered. They cannot be circular.

Another example: Suppose that you’re a hospital administrator. You have $1.2 million to spend, and you have to allocate that on $500,000 to maintain the MRI machine, $400,000 for an anesthetic monitor, $20,000 for surgical tools, $1 million for a sick child’s liver transplant …

There was an interesting experiment in cognitive psychology where they asked the subjects, “Should this hospital administrator spend $1 million on a liver for a sick child, or spend it on general hospital salaries, upkeep, administration, and so on?”

A lot of the subjects in the cognitive psychology experiment became very angry and wanted to punish the administrator for even thinking about the question.
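
The circular-preference example from the transcript (San Francisco, Berkeley, San Jose) can be made concrete with a small Python sketch. This is an illustration only, not code from the talk: the function names `find_utility_ranking` and `money_pump`, the $20 fare, and the ride limit are all hypothetical. The first function searches by brute force for a ranking that agrees with every stated preference; the second simulates an agent that pays for a ride whenever it prefers some other city to the one it is in.

```python
from itertools import permutations

# Stated preferences from the transcript, as (preferred, over) pairs.
PREFERENCES = [
    ("San Francisco", "Berkeley"),
    ("San Jose", "San Francisco"),
    ("Berkeley", "San Jose"),
]


def find_utility_ranking(preferences):
    """Return the options ordered worst to best so that every strict
    preference is respected, or None if no such ordering exists."""
    options = sorted({city for pair in preferences for city in pair})
    for ranking in permutations(options):
        # Use the position in the ranking as a stand-in utility value.
        utility = {city: rank for rank, city in enumerate(ranking)}
        if all(utility[better] > utility[worse] for better, worse in preferences):
            return list(ranking)
    return None


def money_pump(preferences, start, fare, max_rides):
    """Simulate an agent that pays `fare` (a hypothetical amount) to move
    whenever a city is preferred to its current one.

    Assumes each city has at most one preferred alternative.
    Returns (total spent, final city)."""
    upgrade = {worse: better for better, worse in preferences}
    city, spent = start, 0
    for _ in range(max_rides):
        if city not in upgrade:
            break  # nothing is preferred to the current city, so stop paying
        city = upgrade[city]
        spent += fare
    return spent, city


if __name__ == "__main__":
    print(find_utility_ranking(PREFERENCES))          # circular preferences
    print(money_pump(PREFERENCES, "Berkeley", 20, 9))
    print(find_utility_ranking(PREFERENCES[:2]))      # third preference dropped
    print(money_pump(PREFERENCES[:2], "Berkeley", 20, 9))
```

With the three circular preferences, no ranking exists and the simulated agent keeps paying until the ride limit is reached. Dropping any one preference breaks the cycle, at which point a ranking exists and the agent stops once it reaches its most preferred city.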
